Today we demonstrate SHARK targeting the 32-core GPU in Apple's M1 Max, running PyTorch models for BERT inference and training. Previously, we demonstrated better codegen than Intel MKL and Apache/OctoML TVM on Intel Alder Lake CPUs. We also outperformed Nvidia's cuDNN/cuBLAS/CUTLASS libraries, which are used by ML frameworks such as ONNX Runtime, PyTorch/TorchScript, and TensorFlow/XLA.

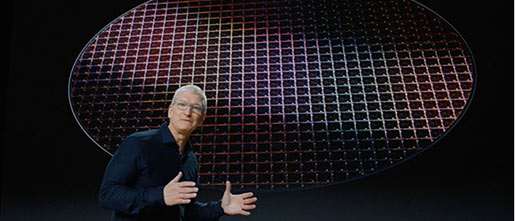

Nod.ai has added AMD GPU support, which lets SHARK retarget its code generation to AMD MI100/MI200-class devices and move machine learning workloads from Nvidia V100/A100 to AMD MI100 seamlessly. Because SHARK generates kernels on the fly for each workload, it can be ported to new architectures like the M1 Max without a vendor-provided, handwritten, hand-tuned library. SHARK is built on MLIR and IREE and can target a wide range of hardware. Apple has released GPU support for TensorFlow through the now-deprecated tensorflow-macos plugin and the newer tensorflow-metal plugin. However, PyTorch, the most popular machine learning framework, lacks GPU support on Apple Silicon.

In this blog post we demonstrate PyTorch training and inference on the Apple M1 Max GPU with SHARK. The port requires only a few lines of additional code and outperforms Apple's tensorflow-metal plugin. SHARK is a portable, high-performance machine learning runtime for PyTorch.
